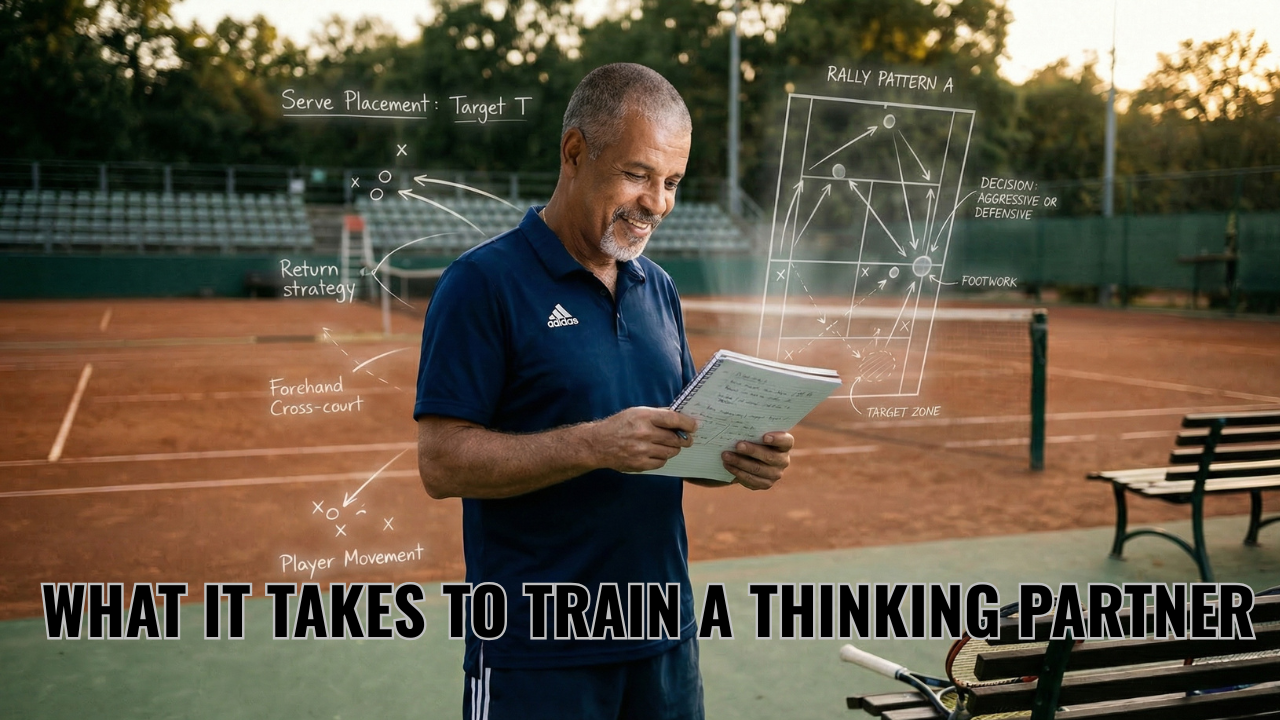

What It Takes to Train a Thinking Partner

Mar 14, 2026

Human to the Power of AI — Essay Four

Artificial intelligence is routinely described as a system that already knows everything. That description is not entirely wrong and almost completely useless. The system may know general patterns, widely shared concepts, and explanations that appear across textbooks and published research in dozens of domains. What it does not know is how a particular coach interprets a practice situation, how a teacher structures a learning progression, or how a parent understands what they are watching from the stands of a junior tennis match. Those details live inside lived experience. They exist in the language people use when describing situations to each other and in the assumptions that guide their decisions without ever being spoken aloud.

Once people understand that the first interaction with a thinking partner is calibration rather than performance, a second realization usually follows: calibration does not happen automatically. Someone has to do the work of introducing the environment.

That requirement surprises people, and the surprise reveals the real gap in how most AI discussions are structured. The conversation almost always focuses on prompting technique: which words produce better output, and how to structure a request so the system responds more precisely. Those questions have limited value. Prompting is simply the mechanism through which context gets introduced. The deeper work is deciding which parts of your thinking architecture are worth introducing in the first place, and then doing the patient work of making that architecture visible to a system that cannot observe it directly.

In mentorship relationships, visibility develops gradually through accumulated exposure. The mentor observes the learner in action, listens to how situations are described, and notices which details are emphasized and which are passed over. They hear a learner attempt to explain what happened and watch the explanation revise itself halfway through a sentence because the telling has exposed something the learner had not fully examined before speaking. The mentor asks about a detail that seemed insignificant at first but turns out to change the entire interpretation of what occurred. Over time those exchanges build a picture that is more specific and more useful than any single conversation could produce. The mentor begins to understand not only what the learner did but how they were seeing the situation while it was unfolding. Once that understanding exists, the questions become sharper and more direct. The mentor can point at the assumption that shaped the decision rather than asking broad exploratory questions that circle the outside of the problem.

Artificial intelligence cannot gather that context through observation. It cannot stand at the side of a court watching a session unfold, cannot sit in the back of a classroom noticing how a particular student processes confusion, cannot read the room in the way that experienced mentors learn to do over years of deliberate attention. What it can do is absorb descriptions of those environments when someone takes the time to articulate them with precision. That articulation is the step most people skip, which is why early interactions feel generic. They ask for help without first explaining the structure of the work. The request may be clear in isolation, but the system responds with something plausible rather than specific because the environment that would give the request meaning has never been introduced.

Consider what happens at the beginning of a serious mentorship conversation. A coach arrives and asks what they should have done differently in a situation that just occurred. A good mentor does not answer that question immediately. They ask about the context that shaped the moment. What was the player trying to accomplish before the decision was made? What had happened earlier in the session that created the conditions for that situation? What options were actually visible from where the coach was standing? Without those details the question cannot be examined usefully, because the situation exists inside an environment the mentor cannot yet see. The question that sounded specific turns out to be abstract until the environment is made visible.

A thinking partner built on artificial intelligence requires the same introduction, but it cannot prompt that introduction through the natural friction of a real conversation. The environment must be described deliberately. The frameworks that shape how situations are interpreted must be made explicit. The language that defines the work, including the distinctions that experienced practitioners make automatically and beginners have not yet learned to see, must be articulated rather than assumed. This is slow work and it is not glamorous. It is also the work that separates a thinking partnership that produces insight from an interaction that produces plausible-sounding noise.

The coaching world offers a clear illustration of what changes once that framework exists. Three coaches can watch the same rally and construct entirely different accounts of what happened. One notices the mechanics of the swing. Another notices the decision that preceded it. A third notices the tactical pattern several exchanges earlier that created the conditions for the decision in the first place. Each coach is interpreting the same physical event through a framework built from years of deliberate observation. None of them is wrong. They are operating inside different layers of the same environment, and which layer they work inside shapes every question they are capable of asking.

If an AI thinking partner is asked to analyze that rally without any understanding of which framework the coach operates inside, its response will miss the mark even when it is technically accurate. It may describe something that is observable and yet irrelevant to what the coach is actually working on. Once the framework has been introduced, the conversation changes completely. The coach can describe what happened and receive questions that probe the decision rather than the technique, the tactical context rather than the isolated shot, the pattern across several exchanges rather than the outcome of one. The thinking partner has learned how the environment is being read, and that learning changes the quality of every subsequent question it is capable of asking.

This is the point where the promise of artificial intelligence begins to look less like automation and more like something that resembles mentorship architecture. The system does not replace the years of observation that allowed the framework to develop. It cannot substitute for the accumulated experience of watching players operate under pressure or the time spent noticing how students struggle with specific ideas at specific stages of development. That accumulated experience is the raw material of judgment and it cannot be shortcut. What artificial intelligence can preserve is the structure that experience created. Once the architecture of someone's thinking has been introduced and refined through deliberate exchange, it does not erode between sessions. The frameworks are retained. The language is retained. The questions remain aligned with the environment that shaped them, and they remain available at the moments when internal reflection is least reliable because pressure has compressed the space that reflection requires.

The environments responsible for developing judgment in young people are still largely treating this possibility as a data problem. The early conversation in sport concentrates on physical performance metrics, technique analysis, and competitive pattern recognition. Those applications sit at the surface of the work. The layer where interpretation and reflection actually shape development has always depended on mentors who ask better questions, and that layer is where the possibility introduced by AI thinking partnerships is most significant and least explored. The architecture of a skilled mentor's questioning can be preserved, made continuously available, and applied to new situations long after the original relationship has ended or the mentor's attention has moved elsewhere. That is a genuinely new structural possibility. Taking it seriously requires something that has always been required at the beginning of every serious mentorship relationship: the willingness to do the slow, deliberate work of making your own thinking visible before expecting someone else to help you examine it.

Next: What happens when thinking partnerships become permanent architecture rather than temporary relationships.
