What Scales Is Not What Works

May 05, 2026

Tuesday — May 5

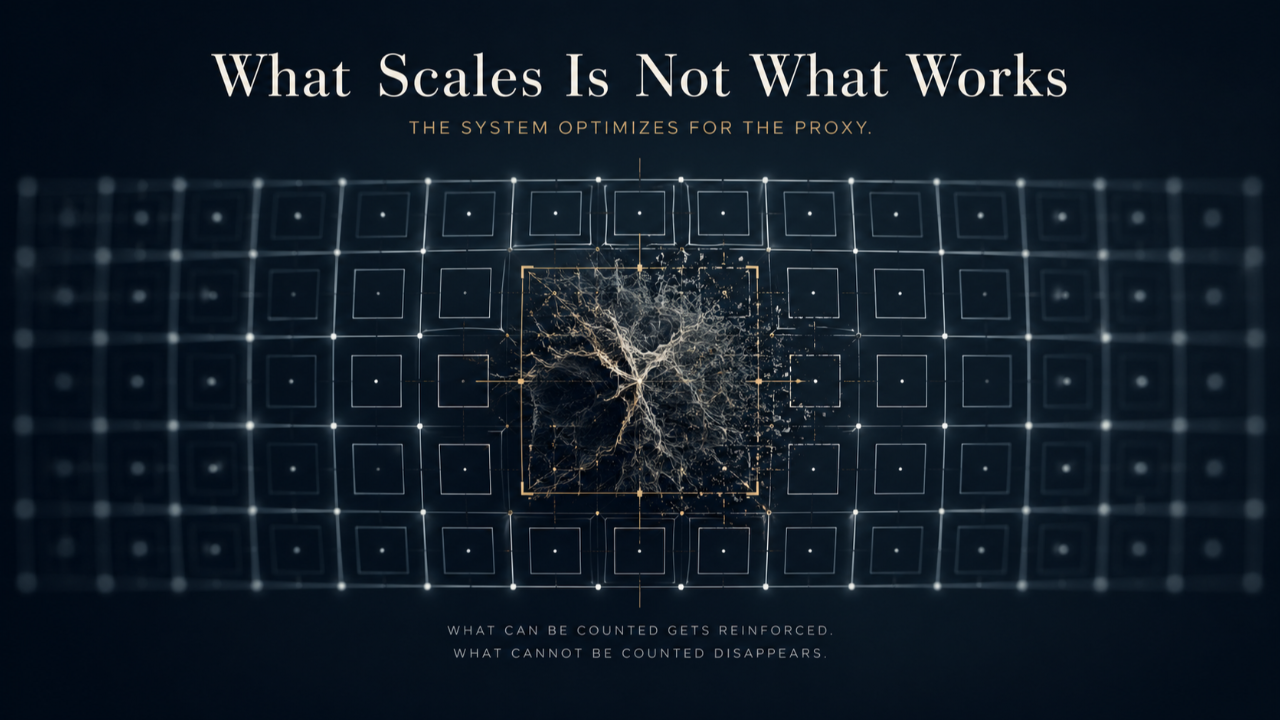

There is an assumption embedded in almost every institution we trust, and it operates below the level where decisions are examined. If something works, it can be scaled. If it can be scaled, it can be made more consistent. If it can be made more consistent, it becomes the model others follow. Over time, that model becomes the standard, and once something becomes the standard, the question of whether it still works is one the institution itself is structurally prohibited from asking. This is not the dramatic kind of institutional failure. It is how institutions evolve quietly into something different from what they set out to be, without anyone inside them having decided to change course.

The substitution happens through a sequence of small optimizations that each feel reasonable in isolation. Each step makes the system more efficient, more predictable, and easier to measure. Each step brings clarity to what was previously ambiguous, and clarity is usually worth trading for. The problem is not with any individual step. It is with what accumulates across all of them, because each step requires choosing what to include and what to leave out. In the early stages of this process, what gets left out is genuinely peripheral, and the system becomes stronger as a result. In later stages, what gets left out is increasingly central, and the system continues to appear strong while the thing it was designed to develop has begun to erode.

A metric is not the thing it was built to measure. It is a representation of the thing, close enough to the original that it can stand in for it during the early phases of a system's development. When the signal and the substance are near each other, the gap between them is inconsequential. Performance scores that track genuine understanding, assessments that proxy for actual competence, outputs that reflect the real quality of what the system produces — in these phases the proxy works because it is essentially accurate. The problem is structural and arrives later, when the system has accumulated enough experience optimizing for the signal that the signal has become easier to manage than the substance itself. The system begins, without deliberating about it, to optimize for the representation rather than the thing the representation was supposed to stand in for, and does so with increasing precision and consistency right up until the original purpose is no longer recoverable from the outputs it produces.

What can be counted gets reinforced. What cannot be counted gets deprioritized, not because anyone decides it matters less, but because the structure used to evaluate progress makes it invisible. Judgment is replaced by decision trees because decision trees can be applied consistently and judgment cannot be fully defined in advance. Understanding is replaced by correct answers because correct answers can be verified and understanding has to be inferred. Adaptability within genuinely novel conditions is replaced by pattern recognition within known constraints because the second can be measured and the first depends on conditions the system cannot reproduce on demand. The system still produces results. In many cases it produces more of them, more consistently, and the external validation that follows continued output confirms to everyone involved that the system is working. That confirmation is the mechanism by which the substitution becomes permanent, because it removes the pressure that would otherwise force the question of whether the results reflect the original purpose or only the current proxies for it.

Scale requires consistency. Consistency requires definition. Definition requires simplification. The moment something is simplified enough to be applied uniformly across a large system, it has already shed part of what made it valuable in the conditions where it first produced meaningful outcomes. This is a structural consequence of the process most institutions use to grow, which means the systems most celebrated for their scalability are frequently the ones most thoroughly detached from the thing they were originally designed to develop. The more successful the system becomes by its own metrics, the harder this is to see from inside it, because the results continue to arrive, the numbers improve, the model expands, and the original question of whether the system is producing what it claims to produce becomes increasingly difficult to ask. Not because anyone is concealing the answer, but because the system no longer has a mechanism capable of generating it.

The environments where this gap is most consequential are the ones where the original purpose was specifically to develop something that cannot be reduced to a pattern, a script, or a repeatable sequence. Human judgment in conditions of genuine uncertainty. The capacity to integrate conflicting information and commit to a decision without complete data. The ability to learn from an experience that was not designed to be instructive. These are capabilities that institutions consistently claim to develop and that their outputs — when examined against the original purpose rather than against the system's own proxies — frequently reveal they have not been developing at all. The gap is not the result of insufficient investment in the system. It is the result of the system having been built around a definition of its purpose that was already simplified enough to scale, which means it was already detached from the purpose before the scaling began.

Most of what passes for reform inside large systems is optimization within the existing framework rather than examination of the framework itself. That is a distinction the system cannot make on its own, because making it requires standing outside the structure being evaluated, and most institutions have no mechanism for doing that. Building that mechanism is the work most institutions defer indefinitely, because the system as it exists continues to produce outputs, and outputs are what the institution is being held accountable for. The argument for examining whether those outputs reflect the original purpose is, inside that context, an argument for introducing friction that the system does not currently feel and cannot currently explain as a gain. The friction is the point. The ability to feel it is the thing most scaling processes eliminate first.

Thursday's post moves into what this examination requires at the level of a single development environment. The problem has the same shape at every level of scale. The constraint is not the size of the system. It is whether there is anything inside it capable of seeing the substitution before it becomes invisible.
